Hello and welcome to the twenty-fourth edition of Linux++, a weekly dive into the major topics, events, and headlines throughout the Linux world. This issue covers the time period starting Friday, September 11, 2020 and ending Monday, September 28, 2020.

This is not meant to be a deep dive into the different topics, but more like a curated selection of what I find most interesting each week with links provided to delve into the material as much as your heart desires.

If you missed the last report, Issue 23 from September 10, 2020, you can find it here. You can also find all of the issues posted on the official Linux++ Twitter account here or follow the Linux++ publication on the Destination Linux Network’s Front Page Linux platform here.

In addition, a Telegram group is available to join here for readers and anyone else interested in discussing the newest updates in the GNU/Linux world.

For those that would like to get in contact with me regarding news, interview opportunities, or just to say hello, you can send an email to linuxplusplus@protonmail.com. I would definitely love to chat!

There is a lot to cover so let’s dive right in!

Community News

GNOME 3.38 “Orbis” Has Been Unleashed!

After an impressive release earlier this year in March, the GNOME team is back at it with the next iteration of the most popular desktop environment and application ecosystem for Linux, and it looks like there is quite a bit to be excited about! Of course, if you are on a Debian or Ubuntu-based distribution, you might have to wait (at least until Ubuntu 20.10 in October) to try out the new GNOME 3.38. However, the GNOME developers have added a nice little feature to their popular virtualization application, Boxes, that allows anyone to try out the new features right now!

Yep, there is now a pure GNOME 3.38 image going by the name GNOME OS, which is intended for developers to test out their code with the most up-to-date versions of GNOME, but anyone can give it a run–as long as you have GNOME Boxes installed. Pretty cool, huh?

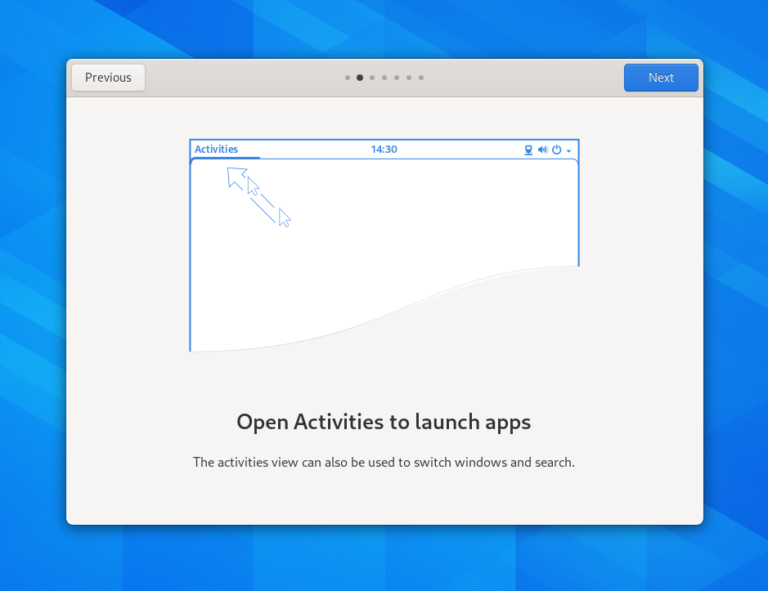

One tremendous addition in 3.38 is a new “Welcome Tour” application that has honestly been needed for far too long. One of the biggest issues plaguing GNOME 3 is how drastically its layout and workflow differ from the more traditional designs that people are familiar with, namely Windows and macOS. This has certainly been a sticking point when recommending the many awesome distributions that ship GNOME by default to new users, as the streamlined design could easily turn people off from Linux without any help or context.

Thankfully, the GNOME team has put in some awesome work with their Welcome Tour application, which is automatically launched upon first boot of the system and will undoubtedly make the transition from Windows, macOS, or another popular desktop environment so much smoother. Oh yeah, it’s also written in Rust, so bonus points there, GNOME Team!

Another major feature integrated into the GNOME Shell is the ability to customize the application grid overview. Previously, the application grid had All and Frequent views that could be toggled. With 3.38, the GNOME Team has removed that toggle and instead lets users customize the application grid themselves. So, instead of having applications listed alphabetically, it is now possible to move your most frequently used applications to the front of the list (or anywhere, for that matter) for easier launching. In addition, groups are created dynamically by dragging the icon of a program directly on top of another. The position of applications inside groups is now completely customizable, just like the application grid itself.

As for the core functionality of GNOME Shell, the settings received a lot of love this release cycle. The main highlight is the ability to manage parental controls for standard (non-administrator) users via a new Parental Controls option under the Users tab. This functionality lets privileged users hide certain applications from the application overview, prevent applications from being launched, and even choose which applications a user can install, through integration with the existing software restriction options.

Another big addition involves the fingerprint reader, hardware that is showing up on more and more devices every year. The GNOME Team built a new settings option that lets users enroll a fingerprint to use in place of typing a password. Moreover, protection was added to block unauthorized USB devices from connecting while the screen is locked, and there is now an option to show the battery percentage in the Shell itself.

Both screen recording and multi-monitor support have gained some massive improvements this round, though the multi-monitor work applies only to Wayland sessions. In addition, the option to restart the system now has its own separate entry in the Power Off/Log Out drop-down menu instead of being reachable only through the Shut Down dialog window.

Moreover, the ability to share a Wi-Fi hotspot across devices has been implemented through the use of QR codes. This is some really cool functionality that makes it very easy to turn your computer into a Wi-Fi hotspot for other devices. The QR code can be found in the Wi-Fi panel in the settings dialog, and all it takes is a quick snap to get up and connected. Pretty cool, indeed!
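For the curious, Wi-Fi QR codes like this one typically encode a short text payload in the de facto `WIFI:` format popularized by the ZXing barcode project, which most phone cameras recognize. The sketch below assumes GNOME follows that common format (I haven't checked its exact output); `wifi_qr_payload` is a hypothetical helper, not GNOME's code, and turning the string into an actual QR image is left to any QR library.

```python
def _escape(value: str) -> str:
    """Backslash-escape the characters that are special in the WIFI: format."""
    special = '\\;,:"'
    return "".join("\\" + ch if ch in special else ch for ch in value)


def wifi_qr_payload(ssid: str, password: str = "", security: str = "WPA",
                    hidden: bool = False) -> str:
    """Build a ZXing-style Wi-Fi payload string (hypothetical helper).

    security: "WPA" (also used for WPA2), "WEP", or "nopass" for open networks.
    """
    fields = [f"T:{security}", f"S:{_escape(ssid)}"]
    if security != "nopass":
        fields.append(f"P:{_escape(password)}")
    if hidden:
        fields.append("H:true")
    # Each field ends with ';' and the whole payload ends with one extra ';'.
    return "WIFI:" + "".join(field + ";" for field in fields) + ";"


if __name__ == "__main__":
    print(wifi_qr_payload("My Hotspot", "s3cret;pass"))
    # WIFI:T:WPA;S:My Hotspot;P:s3cret\;pass;;
```

Escaping matters because a `;` inside a password would otherwise end the field early and produce a code that phones cannot use.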

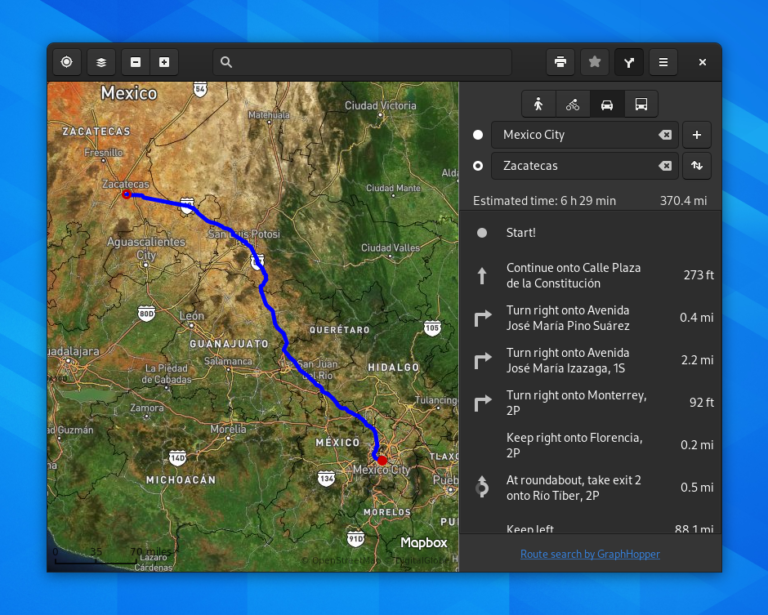

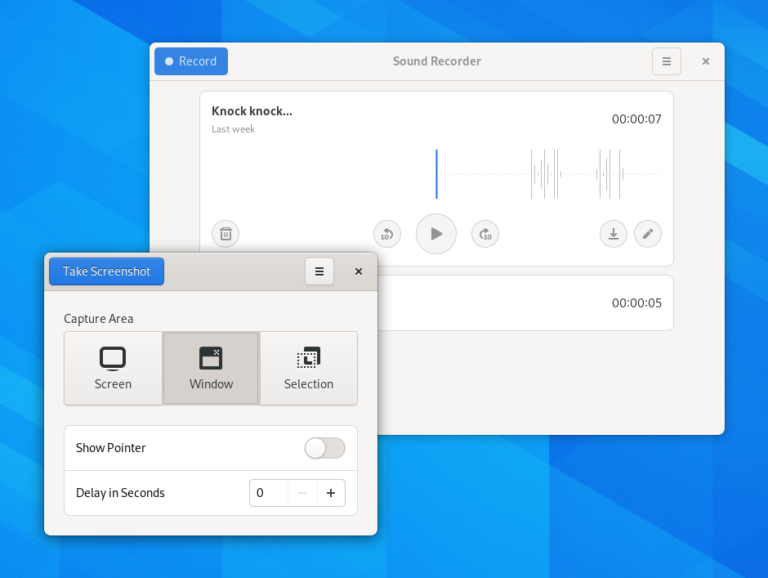

Beyond the Shell itself, a number of official GNOME applications received upgrades, including:

- Intelligent tracking prevention in GNOME Web (Epiphany)

- Support for importing passwords and bookmarks from Google Chrome, plus a redesigned preferences dialog, in GNOME Web

- Labels in the satellite view of GNOME Maps

- The ability to add “world clocks” in GNOME Clocks

- Performance improvements and a redesign for GNOME Games

- A complete redesign of Screenshot and Sound Recorder

- New icons for several applications

- An updated and improved color scheme for GNOME Terminal

- The new Tracker 3

- Performance and usability improvements in Fractal

There is one other change that comes with the release of GNOME 3.38, and this one is likely to be controversial for those who aren’t particularly fond of change. The GNOME team has decided to overhaul its versioning scheme, using alpha, beta, and rc (release candidate) labels for the development stages that previously used odd-numbered versions (like 3.37.90, for example). In addition, the leading “3.” is being dropped, so the major version number jumps to double digits with the next stable release. For example, GNOME 3.38.X will be followed by GNOME 40.0 (instead of 3.40) when the normal six-month release cycle has finished. From there, point releases will be numbered GNOME 40.1, 40.2, 40.3, and so on. After GNOME 40.X, the version number will move to 41.0 for the next major release, and the cycle continues with development releases like 42.alpha, 42.beta, and 42.rc.
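To make the new ordering a bit more concrete, here is a small sketch of how version strings under this scheme compare. `gnome_version_key` is a hypothetical helper written for illustration (not part of any GNOME tooling), and it assumes that pre-release labels sort alpha, then beta, then rc, all before the .0 stable release of the same major version, with the old 3.x versions treated as older than anything from 40 onward.

```python
# Hypothetical helper: a sort key for GNOME version strings.
# Assumed order: <major>.alpha < <major>.beta < <major>.rc < <major>.0 < <major>.1 ...
PRE_RELEASE_RANK = {"alpha": 0, "beta": 1, "rc": 2}


def gnome_version_key(version: str) -> tuple:
    parts = version.split(".")
    major = int(parts[0])
    if major == 3:
        # Old scheme (e.g. "3.38.1"): the first element, 3, already sorts it
        # before GNOME 40 and later; the numeric parts order 3.x among themselves.
        return (major, 0, tuple(int(p) for p in parts[1:]))
    label = parts[1]
    if label in PRE_RELEASE_RANK:
        # Pre-releases come before any stable release of the same major version.
        return (major, 0, (PRE_RELEASE_RANK[label],))
    # Stable and point releases: 40.0, 40.1, 40.2, ...
    return (major, 1, (int(label),))


if __name__ == "__main__":
    versions = ["40.1", "41.alpha", "3.38.1", "40.0", "40.rc", "40.beta", "41.0", "40.alpha"]
    print(sorted(versions, key=gnome_version_key))
    # ['3.38.1', '40.alpha', '40.beta', '40.rc', '40.0', '40.1', '41.alpha', '41.0']
```

The key point the sketch captures is that a plain string or numeric sort would get this wrong: "40.rc" has to land after "40.beta" but before "40.0".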

I don’t have a whole lot to say about the change in versioning, as I’m not a GNOME developer or distribution packager, so it really doesn’t affect me very much. For a fuller description of GNOME’s new versioning scheme, see the official forum post detailing it here.

Obviously, a ton of work and effort was put into the new version of GNOME 3, and I would like to give a huge congratulations as well as a thank you to the entire GNOME Team. The GNOME Project has grown to a massive size, and it is awesome seeing the project continue to dial in on its unique design and usability goals all while continuing to be the most popular desktop environment for Linux. There are quite a few more additions in 3.38, so make sure to check out the release notes linked below!

If you would like to read the official release notes from GNOME, you can find them here. In addition, if you would like to try out version 3.38 through the new GNOME OS image, you can find that here (be warned–it only works in GNOME Boxes right now). Also, the very creative promotional video for GNOME 3.38 is linked below:

Deepin 20 Reveals an Intriguing New Design

Whenever a discussion arises about the most aesthetically pleasing desktop for Linux, the Deepin Desktop Environment (DDE) is almost always at the head of the pack. The amount of detail and polish that the folks over at China-based Deepin Technology put into the Qt-based “face” of their operation cannot be denied. With an air of macOS, GNOME 3, and KDE’s Plasma, DDE has a little something for everyone.

However, another intriguing aspect that I’ve come to notice is that many in the Linux community only know Deepin as a desktop environment, instead of a standalone Debian-based distribution. This may be due to the fact that the desktop environment is the biggest highlight found in Deepin and became well-known through other distributions that support DDE-specific flavors like Manjaro, Endeavour OS, and, more recently, Ubuntu via the new UbuntuDDE Remix project.

Another notable aspect of the Deepin distribution is its suite of custom applications, in a similar vein to elementary OS’s work on its AppCenter applications. These are written specifically to look and work natively on the distribution, and from a full-blown music player to its own font installer, Deepin has you covered.

Of course, since it is Debian-based, there is additional access to software through the APT repositories as well as the universal app format, AppImage. It appears that Flatpak and Snaps are not available out of the box, but can definitely be easily enabled just as with most other Debian-based distributions.

Back in April, I wrote about the beta release of the newest version of Deepin, Deepin 20, which included a significant amount of rework over the five years since Deepin 15 was released. Well, after several months of intense testing and continued development effort, along with the unavoidable effects of the worldwide pandemic, I’m excited to report that the first official version of Deepin 20 launched this past week!

With Deepin 20 comes a whole slew of improvements over its predecessor, but the most noticeable changes are featured front and center in DDE via a massive and comprehensive rewrite of the fantastic looking desktop environment. The primary objective behind rewriting DDE included creating a unified design style for the desktop elements and homegrown application ecosystem as well as making the desktop more intuitive and easier to use for those who are new to the Linux desktop. The rewrite also brought with it many performance improvements, the inclusion of some more advanced configuration features, as well as an entirely new dock/application launching system.

Now, I would be remiss if I didn’t mention the likeness to another operating system with a major UI overhaul: macOS Big Sur. It really is uncanny just how much the two systems look (and act) like each other, but that’s a discussion for another day!

One area that Deepin appears to be focusing on is the ever-growing importance of desktop notifications (and what the heck to do with them). Deepin’s answer is a notifications panel that pops out from the right side of the desktop, similar to the Raven menu in Budgie, and holds all notification information. In addition, the system settings give users fine-grained control over which notifications are allowed to make a sound, show up in the new panel, and even appear on the lock screen.

Applications can also be assigned different “reminding levels”, which means you can set each application to show every notification, no notifications at all, or something in between the two extremes.

Another major talking point for Deepin 20 is the ability to have a dual kernel installation. From the official release notes:

“The system installation interface has dual-kernel options–Kernel 5.4 (LTS) and Kernel 5.7 (Stable) and their ‘Safe Graphics’ modes, bringing more options for system installation, and improving overall stability and compatibility, as the latest kernel supports more hardware devices.”

This is definitely a major plus in my opinion, as better hardware compatibility is a huge draw of systems that use newer kernels, which usually means rolling or semi-rolling release distributions. We have seen a few Debian-based distributions, like Ubuntu and MX Linux, attempt to create hardware enablement stacks, allowing users with newer hardware to reap the benefits of a much newer kernel. Having the option of either the LTS or Stable kernel series is a huge benefit!

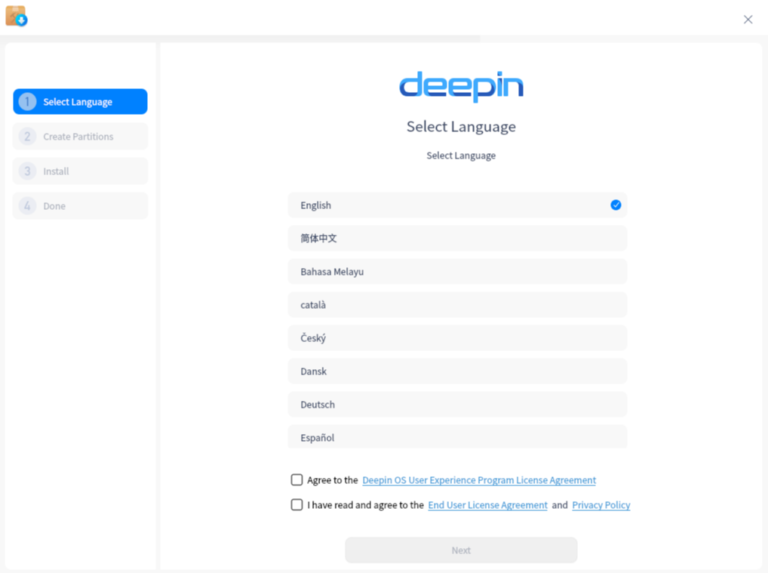

In addition to the major UI makeover that Deepin received with version 20, the installer also sports quite a bit of shine. In fact, the new installer might just be the easiest, most intuitive, and best looking installer out there for Linux, period. While trying the distribution out in a VM, I’m not sure I’ve ever gone from the introductory screen to rebooting so quickly. Of course, this is because Deepin has moved certain parts of the installation process, like setting up a user account and password, to the first boot of a freshly installed system. Still, it is a nice touch, and the installer itself is an amazing looking piece of software.

Another area that the team behind Deepin worked on for the new release is one that is becoming more important day by day–fingerprint reading. Due to the massive improvements we’ve seen with Linux’s fingerprint drivers, Deepin has made sure to include these advancements for those who wish to access their computer through a fingerprint system. A new fingerprint can be scanned and added to a user account through the system settings application under Accounts.

Though the above areas were chosen as the major highlights of Deepin 20, there was also a massive number of bug fixes, system and software upgrades, and other new features, including an updated icon theme, several brand new Deepin-specific applications (Device Manager, Font Manager, Draw, Log Viewer, Voice Notes, Screen Capture, User Feedback), an application update feature added to the App Store, the addition of proprietary NVIDIA driver blobs, improved support for wireless cards and Bluetooth, better scaling with HiDPI screens, improved wallpaper slideshow capabilities, system wakeup fixes, and full-disk encryption support in the installer.

All in all, I have to say that I’m pretty impressed with the work that the Deepin developers have put into the new release. It definitely retains the title of one of the most polished and best looking out-of-the-box distributions, and it is clear that a ton of work went into making the system design as consistent as possible. I can’t speak much to the usability quite yet; I wasn’t a huge fan of Deepin 15 and have only started playing around with the new system in a VM this past week. However, so far, so good. The only issue I’ve experienced is slow download and update speeds, which is likely due to the mirrors being located on the opposite side of the globe.

I’d like to give a huge congratulations to the Deepin team for an excellent job on their new release. It is really exciting to see a Linux distribution with the kind of polished out-of-the-box experience that people have come to expect from the proprietary operating systems. It is obvious that the developers have put in a ton of work to make the experience as pleasant, intuitive, and consistent as possible, which is a major plus for the Linux community as a whole. I definitely look forward to playing around with Deepin 20 much more in the future!

If you would like to read the official release notes from Deepin, you can find them here. In addition, if you would like to test out Deepin 20 for yourself, you can find the ISO image download here. If you would like to keep up to date with the news surrounding Deepin, you can follow them on Twitter, Facebook, their dedicated forum, and their official news blog.

NVIDIA to Purchase ARM in Unprecedented Acquisition

Well, it appears the rumors were true. NVIDIA has agreed to purchase Arm Holdings, the company behind the now-popular ARM reduced instruction set computer (RISC) CPU architecture, in an unprecedented $40 billion acquisition deal officially announced on Monday, September 14, 2020. Though not a massive surprise, since rumors about a possible acquisition had been circulating as early as March of this year, this move will certainly shake up the tech world in a multitude of ways; some can be anticipated, while others are yet to be seen.

Of course, NVIDIA describes the main motivation for the purchase as a move towards even greater artificial intelligence computing dominance, but we will likely see many other effects resulting from the deal. Jensen Huang, Founder and CEO of NVIDIA, had these official words to say of the acquisition:

“AI is the most powerful technology force of our time and has launched a new era of computing. In the years ahead, trillions of computers running AI will create a new internet-of-things that is thousands of times larger than today’s internet-of-people. Our combination will create a company fabulously positioned for the age of AI.

Simon Segars and his team at Arm have built an extraordinary company that is contributing to nearly every technology market in the world. Uniting NVIDIA’s AI computing capabilities with the vast ecosystem of Arm’s CPUs, we can advance computing from the cloud, smartphones, PCs, self-driving cars and robotics, to edge IoT, and expand AI computing to every corner of the globe.

This combination has tremendous benefits for both companies, our customers, and the industry. For Arm’s ecosystem, the combination will turbocharge Arm’s R&D capacity and expand its IP portfolio with NVIDIA’s world-leading GPU and AI technology.”

Though there is no doubt that NVIDIA is one of the leading companies in the field of AI and the purchase of Arm will certainly boost that, it is the other consequences of this deal that many in the Linux community are wary of.

The issue is NVIDIA’s lack of commitment to the open-source community. Unlike its major competitors, Intel and AMD, NVIDIA does not provide the source code for its device drivers for use in projects like the Linux kernel. Instead, NVIDIA ships what are known as “binary blobs”: executable binaries that are closed source and proprietary. Because of this decision, Linux kernel developers have to spend an inordinate amount of time attempting to get NVIDIA hardware to work properly. Just plug “Linus Torvalds NVIDIA” into your favorite search engine and you can very easily find out how Linus feels about the treatment from NVIDIA.

With the ARM architecture on the rise, as companies like Apple begin shifting their product lines to ARM and the architecture remains prolific in IoT devices, the ability of the Linux kernel to continue supporting different ARM architectures is becoming increasingly important. In fact, even the supercomputing industry (one that almost exclusively uses Linux) is jumping to implement ARM, with Fujitsu having built the fastest computer in the world (nearly three times as fast as the previous record holder) earlier this year using the ARM architecture on its compute blades.

Though many people are worried that NVIDIA could do some seriously sinister stuff with ARM, like raising prices on commercial licenses, I am not sure that something like that would exactly benefit their new purchase. In fact, I think that NVIDIA’s best move is to throw money at research, development, and increased engineering power for ARM, but leave the company’s business model alone for the most part (besides the obvious compatibility work that NVIDIA will undoubtedly explore and implement).

There is always the chance that NVIDIA will mess this up, but I am not one who thinks that the sky is falling. I’m curious what others think about this acquisition and what it could mean for Linux and open-source in the future. Go ahead and let me know your position on this controversial topic in the comments below!

So, I ask one more time…is it finally time to start pushing RISC-V? Please?

If you would like to check out the official announcement from NVIDIA, you can find it linked here.

Red Hat Launches New Marketplace & Teases Format for Their Annual Summit in 2021

To many people in the technology field, Red Hat is synonymous with Linux and open-source technology. There’s a good reason for that–they’re the largest enterprise Linux company in the world and they don’t just talk about the benefits of open-source–they embody the philosophy…to a T. In fact, it could be said that Red Hat owns no Intellectual Property (IP) because all of their software is developed in the public eye as open-source software, relying on a support contract-based model for financial profit.

After a massive acquisition by IBM last year, many predicted that Red Hat would focus even more heavily on cloud-based and containerized products, so it wasn’t a massive surprise when they announced the unveiling of the Red Hat Marketplace earlier this month. What is a surprise is just how handy and well-designed this tool is even as an initial release product. From Red Hat’s official announcement:

“Red Hat Marketplace is a one-stop-shop to find, try, buy, deploy, and manage enterprise applications across an organization’s entire hybrid IT infrastructure, including on-premises and multicloud environments.

Red Hat Marketplace Select, a private, personalized marketplace experience, is also available for enterprises that want greater control and governance with curated, pre-approved software for more efficiency and scale.”

The marketplace offers a wide variety of certified software for any cloud running Red Hat’s OpenShift container platform, spanning several essential software categories including artificial intelligence and machine learning, developer tools, networking, security, application runtime, integration and delivery, big data, logging and tracing, storage, databases, monitoring, and streaming/messaging platforms.

I expect that we will continue to see more offerings from IBM and Red Hat that lower the barrier to entry for containerization platforms like OpenShift, as they maintain their role as a leader and innovator in this burgeoning space. I want to congratulate Red Hat for the awesome work on such a powerful and easy to use tool. Red Hat certainly knows how to make developers’ lives easier!

In other news coming out of the Linux-focused company, it was recently announced that their annual Red Hat Summit event will take on a new format starting in 2021. In the wake of the COVID-19 pandemic, many companies have had to rethink and re-engineer their efforts in order to keep their employees and customers safe in a time of uncertainty. Consequently, many conferences (places for like-minded people to gather, discuss, and hear the top experts in a field speak about exciting new advances) were forced to cancel their activities or pivot to an online format.

The popular Red Hat Summit was no different. Red Hat decided early on to take their annual gathering online for the benefit of attendees, speakers, and employees alike. However, this dramatic shift in conference technology has prompted some at Red Hat to look at the current format of the Summit and envision how it could be improved in the future. From their official announcement post:

“We could take the same approach with Red Hat Summit next year [2021], it would certainly be the safer and simpler option. But that’s not Red Hat. Instead of repeating what we’ve already done we’ve decided this is a good time to explore new worlds and build on our successes while also trying new things.

We’re pleased to announce that Red Hat Summit 2021 will be a three-part experience that includes two virtual components in the spring and summer and a series of in-person events later in the year!

What energizes us most about this new hybrid approach to Red Hat Summit is that it will enable us to be more inclusive and engaging throughout the year. Bringing more customers, partners, technology industry leaders, and open source enthusiasts from around the world together means we can provide more opportunities for innovation, collaboration, and learning.”

Though a continued lockdown due to COVID-19 is certainly possible in 2021, it’s inspiring to see Red Hat’s enthusiasm for expanding their once-a-year event into multiple gatherings throughout the year, allowing more people than ever before to participate in the open source initiatives led by Red Hat and their partners.

So, what will Red Hat’s event timeline look like next year? Currently, the new initiative is still in a constant state of flux, though some details have been nailed down as of the time of this writing. It all starts on April 27-28, where Red Hat will host a virtual event that details the most compelling news and announcements coming out of the company (and open source community beyond), which will include Q&A sessions, titled “Ask the Red Hat Expert”, in order to get direct access to engage with some of the leading engineers and developers from the company.

A couple of months later (June 15-16), another virtual event will be held…and this one is presented as a technical deep-dive into Red Hat’s major software solutions and platforms! The virtual conference will have different tracks devoted to a variety of areas in the software stack, including cloud computing, containerization efforts, Kubernetes orchestration, and advances in the Linux kernel and greater ecosystem. Like the previous event, this one will host virtual “booths” where attendees can engage with the leading experts from Red Hat in the technical track of their choice.

The year rounds out with the main event taking place sometime in Autumn 2021. This will include several in-person, small-scale events where attendees can get the physical experience of the pre-COVID conference by networking, participating in hands-on, interactive sessions including labs, demos, and training as well as meeting other Red Hat users or open source enthusiasts. In addition, these events will give attendees the chance to speak one-on-one with the experts at Red Hat to discuss possible uses of the latest, cutting-edge offerings for their business or personal lives.

This is really exciting stuff coming out of Red Hat. Though the Red Hat Summit this year was extremely smooth and well thought out, it is nice to see a hybrid approach being taken in the future in order to reach as much of their audience as possible. I want to congratulate Red Hat for making a progressive move that will benefit everyone in the community greatly going forward. So, will I see YOU at any of the Red Hat events next year?

If you would like to learn more about the Red Hat Marketplace, you can find additional information here. If you would like to read the official announcement post from Red Hat regarding the 2021 Summit format change, you can find it here. In addition, if you would like to check out some of the incredible presentations from this year’s Summit, you can find them recorded here.

Zorin OS 15.3 Has Been Released

Today, there are many distributions out there that aim to make desktop Linux adoption as easy as possible, especially for new users coming from proprietary operating systems like Windows 10 and macOS. On the front lines of this battle are the wonderful developers over at Zorin OS, who have taken an Ubuntu LTS base and molded it into one of the cleanest, easiest to use, and most professional looking distributions for new users and master sudoers alike. The team is focused on pushing the innovation of desktop Linux to new heights; just check out their awesome initiative, the custom Grid tool, which aims to make syncing Zorin OS across a multi-user system easier than ever.

The design of Zorin OS can match anything in the proprietary world and they don’t just stop at the distribution itself–that same polish and professionalism is carried over to their extremely attractive and modern website as well–which makes Zorin OS a very appealing face for desktop Linux as a whole.

Earlier this month, the Zorin team announced the latest release in the current 15 series, 15.3. It has been quite a while since we’ve covered Zorin OS news, which likely means the developers are hard at work on parts of the operating system that will go live when version 16 hits. For now, though, Zorin OS 15.3 brings a ton of software and system updates, including the newer hardware enablement (HWE) stack from Ubuntu 20.04 on top of its Ubuntu 18.04 LTS base.

In the release notes, the Zorin team highlights the successes they have seen, even as a younger distribution than most. Indeed, Zorin OS 15 has been downloaded over 1.7 million times, and over 65% of those downloads came from machines running Windows or macOS! This suggests that the team is doing well in its mission to bring Linux to everyone, especially those who are trying it for the first time.

Of course, with the updated HWE stack, Zorin OS 15.3 now ships with the much newer Linux kernel 5.4, which allows for greater performance, stability, and security along with support for a much wider range of hardware. In addition to the upgraded kernel and system-level components, many applications in Zorin OS 15.3 have seen updates, with LibreOffice serving as the poster child in the release notes.

Zorin 15.3 ships with LibreOffice 6.4.6 by default which includes a number of improvements like better compatibility with Microsoft Office documents, additional document editing features, more support for commenting in documents for increased collaboration, and many performance boosts. In addition to LibreOffice, a number of other applications have seen upgrades including many of Zorin’s custom and core applications.

Moreover, the Zorin Connect application for Android has been updated with improvements such as:

- Only auto search for devices on Trusted Wi-Fi networks

- Quick buttons to send files and send clipboard in the persistent notification

- Full support for the latest versions of Android

- Enhancements for stability and performance

Because this is a minor point release, you will not notice any major changes to the distribution’s UI elements or layout. This release is mostly a nice refresh to bring the system up-to-date for the best performance, security, and stability. I want to congratulate the Zorin OS team for hitting some very impressive milestones along the journey of Zorin 15 development. I often feel that Zorin gets overlooked when community members recommend distributions like Linux Mint, elementary OS, or Ubuntu to new users, and I think that is quite a shame. Zorin OS is truly one of the most polished, professional, and usable Linux distributions out there today.

I know that I’m certainly looking forward to Zorin’s future–I imagine that the developers will really bring the distribution to the next level with some of the features they are building into Zorin 16 and can’t wait to check it out. For now, though, I’m okay with a respectable update to the OS with minimal changes.

If you would like to check out the Zorin OS 15.3 release notes, you can find them here. In addition, if you would like to try Zorin OS out for yourself, you can find the download images here.

Endeavour OS Releases First ARM Images

As more and more devices are built on ARM technology, there has been a dramatic rise in Linux distributions supporting the architecture on single-board computers, mobile devices, and desktop computers. Of course, Linux as a whole has been leading the charge into the reduced instruction set computer (RISC) world, of which ARM is a particularly popular architecture. This has led many popular desktop Linux distributions to spin off dedicated images for ARM devices alongside their usual images for the x86_64 chips that dominate the desktop computing world.

This week, another distribution joined the ranks of those progressive distributions, and it’s one of my personal favorites: Endeavour OS. You might know the project as a rebirth of the once-popular Antergos distribution, whose developers unfortunately shut the doors on their Arch-based operating system last year. The end of Antergos inspired many loyal users to carry on with a similar ethos, and they built the foundation of Endeavour OS.

Endeavour OS takes a unique approach to its goals compared to many popular distributions out there. Instead of attempting to make the distribution simple and user-friendly, as many Debian and Ubuntu-based distributions (and even the extremely popular Manjaro) do, Endeavour encourages users to treat the distribution as a learning ground for breaking into the more advanced areas of the GNU/Linux operating system. The main focus of Endeavour is community: when you get stuck with something in the distribution, there is an army waiting to help out in the official forums!

For instance, instead of hiding the terminal behind GUI helper programs, Endeavour encourages the use of this fundamental tool as a way of becoming more familiar with the nuts and bolts of the operating system. This is partially because Endeavour is an Arch-based distribution that attempts to stay as close to pure Arch Linux as possible, while providing a much easier-to-use graphical installer. So, Endeavour is in the unique position of providing an easy install process to get the system up and running, while leaving much of the actual configuration up to the user in the Arch way.

Now, Arch Linux on ARM devices is not a new idea by any means. In fact, there is a dedicated project, Arch Linux ARM, that brings the popular minimalist distribution to the rapidly growing architecture. In addition, Manjaro, the most popular Arch-based distribution today, has an impressive portfolio of support for many ARM processors, especially those used in the Raspberry Pi series and the groundbreaking PINE64 suite of devices. This has spun off into dedicated Manjaro images for the Pinebook Pro (where Manjaro is the default operating system that ships with the device), the PineTab, and the PinePhone, where Manjaro supports multiple environments including UBports’ Lomiri, KDE’s Plasma Mobile, and Purism’s Phosh.

From the official announcement post by the Endeavour team:

“Theoretically, Endeavour OS ARM can run on any ARM devices, but we recommend an ARM device with the following specs:

- The device must be supported by Arch Linux ARM

- A Quad CPU with 1.5 GHz and up

- At least 2GB of RAM

- Two USB 3.0 ports for external drives and possibly additional USB 2 ports for keyboard, mouse, etc.

- A 1 Gbit ethernet connector

Other than the first specification, having less than the above specifications do not rule out an install wouldn’t work. A 10/100 Mbit ethernet or only USB 2 ports would only mean that performance could be affected on certain operations.”

As of this writing, there are manuals for several pieces of hardware, including the Pinebook Pro, the RockPro64, and other PINE64 devices. The ARM images come with a slightly different install process than you would expect from the x86_64 Endeavour OS image. The install has two distinct phases: first installing the Arch Linux ARM base, then running another script that guides you through installing Endeavour OS as either a desktop or a headless server. As with the x86_64 version of Endeavour OS, the ARM installation offers a choice of desktops, including Xfce, GNOME 3, KDE Plasma, Cinnamon, Budgie, LXQt, MATE, and the i3 window manager.

This is an extremely exciting moment for the Endeavour team as well as their loyal user base. I know that I will be installing it on my Pinebook Pro as soon as I get some extra time. I want to give a huge thanks and congratulations to the hard working Endeavour OS team for committing to explore other avenues, like ARM, with their awesome distribution!

If you would like to learn more about the Endeavour OS ARM release (as well as their normal September update), you can find the link here. If you would like to install Endeavour OS ARM on your device, you can find a guide at their dedicated ARM page here. Moreover, you can follow along with the Endeavour team through Twitter, Mastodon, Telegram, and their official forums.

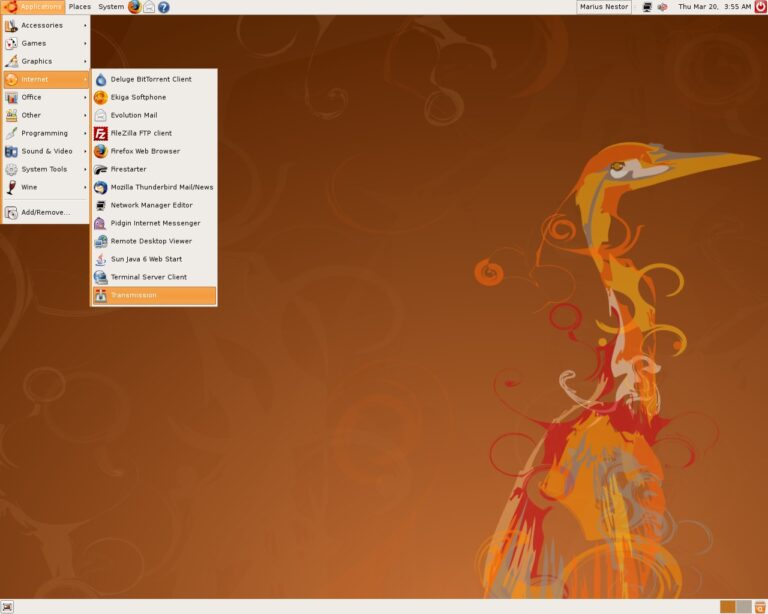

Ubuntu Community Council to be Reinstated

If you’ve been in the Linux ecosystem for long enough, you’ll have witnessed the drastic evolution that Canonical, the company behind Ubuntu, has gone through over the years. Ubuntu grew from humble beginnings in 2004 into the most popular Linux desktop and cloud distribution in the world, and it is safe to say that it has had a major hand in the direction Linux has taken in the modern era.

One aspect that Canonical hasn’t shied away from is exploring different solutions to problems they’ve faced. Many people like to explain this away as “not invented here syndrome”, but it really is a lot deeper than that. And even though seminal projects like Upstart, Unity, and Mir have been discontinued, pivoted, or handed off to the community, their existence has helped push Linux forward in so many ways.

However, in recent years Canonical has definitely begun focusing much more of its attention on areas that bring in profit; it is a company, after all. Consequently, the Linux desktop gets noticeably less focus than it did in Ubuntu’s early years, when the distribution skyrocketed in popularity by being the first that was easy enough for non-technical people to install and set up, a move that brought more people to the Linux desktop than ever before.

The popularity of Ubuntu on the desktop also contributed to its massive adoption in containerization and cloud computing all around the world. In fact, if you see a tutorial for installing a piece of software on Linux, most of the time it will be a tutorial for Ubuntu (and many times only for Ubuntu). The distribution has certainly become the face of Linux for those who know or care little about the underlying operating system.

Moreover, Ubuntu’s desktop innovation and popularity have made it one of the most popular distributions to use as a base for other distributions. In fact, a multitude of beginner-friendly and extremely popular distributions are built on top of Ubuntu, including Linux Mint, elementary OS, Zorin OS, Pop!_OS, KDE neon, Nitrux, Linux Lite, and Peppermint OS, along with the vibrant official “flavours” like Kubuntu, Ubuntu MATE, Xubuntu, Ubuntu Budgie, and Lubuntu. In addition, in the past year, several Ubuntu “Remix” projects have risen up, like Ubuntu Cinnamon Remix, UbuntuDDE Remix, and Ubuntu Unity Remix.

Throughout the years in which Canonical focused heavily on the desktop Linux experience, one problem it faced was community backlash against the company’s vision for Ubuntu. Because the company is so tightly entwined with the community, Canonical receives an astonishing amount of criticism compared to other Linux companies like Red Hat and SUSE, whose desktop offerings, Fedora and openSUSE respectively, are not official products of the companies but are considered community-run. So, when something goes wrong on Fedora, nobody screams at Red Hat. Canonical doesn’t get that same separation from Ubuntu.

In addition, the popularity and dominance that Ubuntu has over desktop Linux makes it the largest target for disgruntled Linux users. Some people don’t wish to see Linux become a commodity operating system usable by anyone and everyone, and they attempt to gatekeep by belittling those who choose Ubuntu, and by extension, their skill, ideas, and even their character. This has been one of the major reasons that many people (and even companies) steer clear of desktop Linux as a whole: the small but vocal minority can be extremely toxic and unwelcoming. And, more often than not, that toxicity is pointed toward Canonical or Ubuntu.

So, it’s pretty easy to see why Canonical hasn’t put the same amount of effort into the Ubuntu desktop as of late. Would you want to put enormous effort into something where, no matter what choice you make, the result is a constant barrage of bashing from the community? Nope, and Mark Shuttleworth, the founder and CEO of Canonical, doesn’t either. Instead, the company has shifted toward more profitable and less toxic communities like cloud computing and containerization. This has led to a slight erosion of the relationship between the community, once the heart and soul of Ubuntu, and the company.

Add in some major successes with enterprise-focused products and projects like Juju, Snapcraft, MAAS, LXD, Landscape, and OpenStack, as well as major partners in the enterprise tech space like Microsoft and Google, and it makes even more sense for Canonical to focus on those relationships and products, where it won’t get the community blowback it receives for building projects and tools specifically for the desktop. Sometimes it feels like the hand that feeds has been bitten too many times.

However, a recent post by “bkerensa2” on the Ubuntu Discourse forums, titled “The Future of Ubuntu Community,” seems to have struck a nerve with many Canonical employees, Ubuntu contributors, and especially Shuttleworth himself. The post discusses the deteriorating relationship between Canonical and the community, especially the fizzling out of the Ubuntu Community Council, once a helpful liaison between Canonical and the vast community that surrounds Ubuntu.

In response to the well-written post that exposed some serious concerns from those who used to be heavily invested in the community, Mark Shuttleworth wrote a lengthy post:

“I’m not absent. In fact, for the past few years I have set aside all other interests and concerns to help Ubuntu get into a position of long-term sustainability. That has been an amazingly difficult job, but I set my mind to it precisely because I care that Ubuntu as a community has a backbone which is durable. So I am in fact more present than ever, and just as concerned with our community’s interest, and just as appreciative of the great work that many people continue to lead under the banner of Ubuntu in all its forms.

I am rather frustrated at my own team, because I have long allocated headcount for a community lead at Canonical, a post which has not been filled.

It’s necessary to have a dedicated lead for this, not so much because the community needs leadership, but because its self-motivated leaders need support. Think of the role more as community secretary than community advocate, helping to get the complicated pieces lined up to empower others to be great. The project has continued to grow in complexity and capability, there are more people than ever working on it, more people than ever making demands on it, so getting things done requires patience and coordination. Helping motivated community leaders to be effective in driving their work forward is important to me.”

Shuttleworth went on to describe the deterioration of the Ubuntu Community Council, where he watched members become detached, skip meetings, and stop organizing meetings altogether. He posits that the cause was likely the nature of the work: settling disputes within the community. Today, there are well-defined guidelines for contributing to Canonical’s projects, as well as a code of conduct, and Shuttleworth said that disputes and issues within the community have become few and far between.

Therefore, the Ubuntu Community Council would need a different direction and structure if it were to be reinstated. And make no mistake, Shuttleworth would love to bring it back if it could be “professionalized” and “perform real, satisfying work that requires dedication and judgement, but also generates reward for those who put in the effort”.

In his final statement, Shuttleworth leaves the discussion up to the Ubuntu community to bring forth ideas on a new structure for the Ubuntu Community Council:

“By all means, continue this thread, I think its interesting to see what others might come up with in terms of how we improve community representation and coordination. I don’t thing the dispute-resolution function is sufficient to attract high quality people with dedication and focus to the work, so the question is, what will?”

And that should be that, right? Well, not really. Over the weekend following his response, Shuttleworth decided to move right away to reinstate the Ubuntu Community Council once again:

“Having considered it over the weekend, with @wxl’s offer to help run the process, let’s go ahead and call for nominations to the CC. If folks could direct those nominations to @wxl during the rest of September, I will review and put forward the shortlist as usual in the second week of October, and that means we could have a new CC in place by the middle of October.

Which would be great.

Thanks for reminding me @wxl that it’s worth having the group in place even if it isn’t particularly active, it’s a good opportunity for those who do want to drive things forward to do so. There are is a great deal of work being done, and it would be nice for people to have the CC in place to support that.

Apologies again for having dropped the ball.”

So, it looks like the Ubuntu Community Council will be reinstated after all! If you are an Ubuntu contributor or community member, or know of someone who would be an excellent fit on the Community Council, make sure to nominate before the end of September.

I’m happy that the community has brought this to light and that Mark is moving to address the concerns of the Ubuntu community. I hope that they are able to transform the Community Council into something more than a dispute-resolution body: an echo of the community’s voice and ideas that brings the efforts of Canonical and the Ubuntu community closer together than ever before.

If you would like to read the original post that sparked this conversation on the Ubuntu Discourse, you can find it here. If you would like to read Shuttleworth’s full response, you can find that here. If you would like to read the final decision to reinstate the Ubuntu Community Council along with information on nomination for the Community Council, you can find that here.

Community Voice: Eric the IT Guy

This week Linux++ is very excited to welcome Eric the IT Guy, Solutions Architect for Red Hat and co-host of the recently launched Sudo Show podcast, part of the Destination Linux Network. Eric is an incredible asset to the open source community. Having worked as a Linux Systems Administrator for many years, he brings a unique perspective to his engaging and informative content, drawing on extensive experience with the technical, business, interpersonal, and community aspects of open source. To summarize in Eric’s own words:

“I am known across the community as Eric the IT Guy. By day, I am a telecommunications-focused Solutions Architect for Red Hat–in other words, a sales engineer. However, my real passion, and the space I have begun to explore in the community is that of a technical evangelist and coach. I got started at LUGs (Linux User Groups) and meet-ups and realized I had a desire to share the lessons I have learned in an effort to help others find joy in their careers.

Last year, I gave talks at a number of conferences. This year, I achieved a long-time goal of launching a podcast, the Sudo Show, through the Destination Linux Network! When I am not working or podcasting, I am spending time with my family. Then, after a busy day, I like to unwind with a book or play Dungeons and Dragons with friends.”

So, without further ado, I’m happy to present my interview with Eric, the one and only, IT Guy:

How would you describe Linux to someone who is unfamiliar with it, but interested?

“Linux is everywhere. You interact with it every day whether you know it or not. Almost all the websites you visit and many of the mobile devices run a form of Linux. This is why those devices are fast, stable, and secure. If you trust Linux for your mobile device or to run your business on, why not run it on your desktop or laptop too?”

What got you hooked on the Linux operating system and why do you continue to use it?

“I got started on Linux because it was cool to tinker with. It was customizable, a third option, and off the beaten path. When I started trying to use it full-time on the desktop, it was because that brought me closer to the open source community. Linux is what the community was running–it was a bonding and collaborative experience. Now, I still enjoy those things about Linux, but I continue to use it because it is a secure and efficient tool to get my work done.”

What do you like to use Linux for? (Gaming, Development, Casual Use, Tinkering/Testing, etc.)

“I use it for everything. It’s my livelihood. The products and projects I support are all built off of Linux. It’s my daily driver at work and at home. I use it for my general desktop and most of my gaming takes place on my AMD rig!”

Do you have any preferences towards specific Linux distributions? Has your daily driver changed over time? Do you test out multiple distributions at all?

“I have never been much of a distro-hopper. The past several years, I’ve exclusively run Fedora Workstation, though I have tried out RHEL 8 (Red Hat Enterprise Linux) and Ubuntu. When I started, though, I ran Arch! On the server side, it’s either Fedora Server or RHEL 8.”

Do you have any preferences towards desktop environments? Do you stick with one or try out multiple different types?

“Like distros, once I found my workflow, I haven’t ever really hopped from one to another. Before coming to Linux, I was a macOS user. So, a polished desktop with a smooth workflow was a must for me. Every so often, I’ll use Plasma, but for the vast majority of everything, I go to GNOME.”

What is your absolute favorite aspect about being part of the Linux and open source community? Is there any particular area that you think the Linux community is lacking in and can improve upon?

“Honestly? The people, the relationships. I have friendships with people in the community that have lasted years and are still growing. I can look at my Matrix chat and see friends, mentors, coworkers, and colleagues. If I need help, there are groups I can go to and not feel like I am asking a stupid question, and I can usually get a quick answer too!”

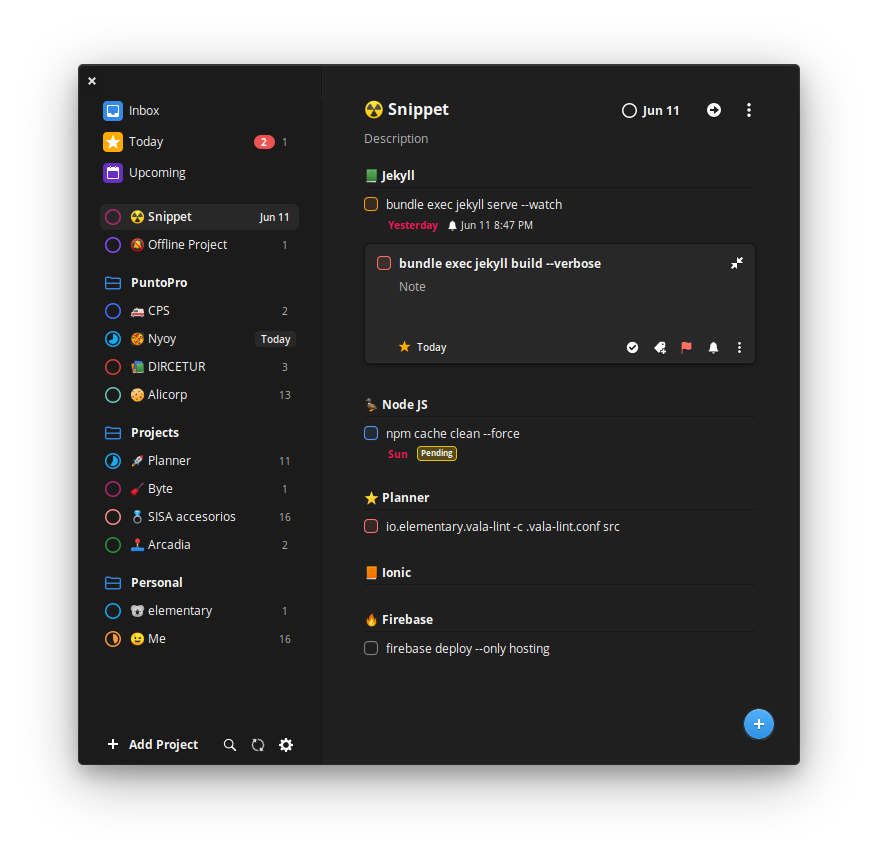

What is one FOSS project that you would like to bring to the attention of the community?

“I think one project I am really excited to keep my eye on is Planner. I have a mild addiction to Todoist, which for me is where I do ALL my project planning and task management from emails to social media schedules to building work presentations. I love everything about Todoist…except that it’s cloud-based and a proprietary subscription model. Planner is an open source alternative that has come a very long way in recent releases (even syncs with Todoist!).”

Do you think that the Linux ecosystem is too fragmented? Is fragmentation a good or bad thing in your view?

“That is a tricky question. First off, the ability to fork code is what has given us some of the most popular projects we have. Someone has an idea, they build a project, developers have divergent ideas of how to solve that specific problem, so, they fork the code, go their separate ways, and now you have two options where one or the other is likely capable of matching your workflow.

The only problem in the world of FOSS is that if you have two options, there are usually twenty. Most projects have one to three developers at most. It would be better if, instead of maintaining a number of different projects to solve the same problem, developers could team up and build customizable features and workflows on top of an already proven codebase. Getting there would definitely be the problem, though. There are so many great projects, there’s no way to just pick a couple.”

What do you think the future of Linux holds?

“Wearing my IT professional hat, (Red Hat, get it? Ha!), Linux is the dominant force and will be for the foreseeable future. Linux is taking over infrastructures, is dominant in cloud, and almost the only player in the IoT (Internet of Things) space.

From the community aspect, I love what I am seeing. More companies are taking an open source first approach. More companies are getting involved in community projects. Heck, companies are even starting to apply open source principles to their business processes too!

Every day, new technology enthusiasts make their way into the open source community and schools are starting to teach open source development. If you look at the biggest trends in development and technology, I would say 99% of them have roots in open source. So, if you want to know what the next big trend will be, see what the open source community is excited about and working on!”

What do you think it will take for the Linux desktop to compete for a greater share of the desktop market space with the proprietary alternatives?

“The concept of a traditional desktop is very much in flux right now. Tablets are getting faster and come packed with more features than ever. Smart phones are everywhere. The web browser and netbooks are replacing many laptops and desktops for families’ day-to-day computing needs. I think the need to win the traditional desktop is aging out.

If Linux wants to compete in the general consumer market, we need to work with our desktop environments and all our projects to develop better touch and mobile-focused UIs (user interfaces). Companies like PINE64 are already fighting that battle and are contributing heavily to this future. I won’t make some grand prediction, but, for me specifically, if a Linux tablet comes out that can provide a form of convergence, that could compete (eventually) with the likes of the iPad Pro, I’d be all over it!”

How did you get into podcasting coming from a sysadmin background?

“Great question. I actually got into podcasting because of my sysadmin background. After spending a decade in operations, you learn a thing or two–you have a few stories to tell. A couple of years ago, I started to get more involved in the community and had friends and mentors show me that my passion and energy weren’t being fully utilized spending all my time on the command line.

I wasn’t getting fulfillment out of my work. So, I moved out of operations and into sales. Now, instead of installing and managing products, I talk to teams every day about how different products can bring about a completely new way of getting work done. I work with systems, network, and security administrators and developers on the importance of automation, the benefits of a hybrid-infrastructure, and how implementing DevOps principles can bring about more efficiency and job satisfaction.”

How has open-source software evolved throughout your career in technology?

“When I started in IT, open source was just starting to find its roots. In fact, when I first started, git hadn’t even begun to be widely adopted! Since then, I have watched it grow and gain adoption. I even gambled my entire career on Linux and open source a few years in by deciding to pursue an RHCSA and leave my stable job as a Windows Server Administrator to work at a different company as a full-time Linux Systems Administrator. I am so glad that gamble paid off, haha!”

How did the idea of the Sudo Show come to life? Has the idea changed over time?

“If you look around at the content being generated, there are plenty of business-focused podcasts and there are plenty of community-focused podcasts. There really aren’t that many that look at the business AND open source! That’s where the Sudo Show came into being. It focuses very heavily on enterprise open source and tackles topics about the community and careers. It combines the experiences of Brandon (my co-host) and me as IT professionals and as open source advocates into one show. It brings together lots of different aspects of work and the industry, from DevOps, open source, and cloud computing to even some productivity tips and tricks.

The funny thing is the Sudo Show has gone through a number of different iterations before it actually went live. I have worked to launch a podcast for the past couple of years! It started out as the IT Guy Podcast. I got as far as recording a pilot episode. I watched it again not too long ago and it was painful to watch! It was twenty minutes of me rambling on about what Linux is.

The show got a rebrand to Sudo Radio a year or so ago, but never found its voice or a place to launch. Finally, starting at SELF (SouthEast LinuxFest) 2019 and into this year, I worked with Michael Tunnell from TuxDigital and found a home with the Destination Linux Network. I also found a co-host in Brandon Johnson, a friend, mentor, and now coworker at Red Hat. The show got a new name and new logo and we launched.

The one thing that hasn’t changed in all this time is the heart of the show. Since I bought my first microphone, my dream has been to inform and enable others to maintain a good work-life balance, keep learning, and influence their teams and businesses to find better ways of doing work.”

Can you explain what makes a piece of software “enterprise open-source”? How does an open-source project go from being a hobby/side project to one trusted by enterprise customers?

“It’s easier to explain through an example. Let’s take RHEL and Fedora. At Red Hat (and other companies with a similar model), there are projects and there are products. Fedora is an open source project. Fedora is my favorite Linux distro on the server and on the desktop. It has a large community that supports it and even ‘spins’ that cover different use cases (like Plasma or IoT). It’s free, it’s community-driven: it’s a project.

Red Hat Enterprise Linux, on the other hand, is a product. RHEL is based on Fedora. Red Hat stands as a bridge between its paying customers and the open source community. Red Hat helps support Fedora with resources and expertise. However, the BIG differentiator between forking an open source project and selling it versus enterprise open source is that Red Hat (and its customers by proxy) commit features and bug fixes upstream first! So, anytime a new feature is released in RHEL, it was first built and tested in Fedora! A great example of this is how Fedora dropped Docker for Podman. When RHEL 8 was released, Podman was the supported containerization engine.

Customers of an enterprise open source company get an advocate to the open source community and the guarantee of company support and security while the open source community benefits from all the bugs and use cases found by the paying customer! This is true of RHEL and Fedora, Satellite and Foreman, OpenShift and OKD, and a dozen others.”

What is your favorite part about working for an open-source first company like Red Hat?

“Red Hat was a huge part of my career long before I worked there. I worked almost exclusively on RHEL servers for most of my time as a sysadmin. I love their products, but what I loved even more was their position within the community. They give back. Red Hat is one of the leading contributors to the Linux kernel, Kubernetes, and dozens upon dozens of open source projects and libraries across the entire ecosystem.

However, my absolute favorite part of my job at Red Hat has to be the encouragement to give back on an individual level. I am encouraged, in addition to my duties as a Solutions Architect, to do something outside my role, whether it’s developing code or, in my case, creating content.”

In your perspective, how does Red Hat operate in a way that allows them to keep their entire product line open source? How active is Red Hat in the greater open source ecosystem and the community in general?

“Ha! Red Hat’s secret sauce is…they have no intellectual property. The product line can remain open source because no development takes place within the products themselves. Everything goes upstream first. Instead, Red Hat provides training, customer support, and consulting to generate revenue. As long as Red Hat stays true to that mission, there will always be people and companies who will pay for the support, advocacy, and relationships I described earlier.”

Do you have any major personal goals that you would love to achieve in the near future?

“My big goal right now is to make sense of my place in all of this. I have been a sysadmin for a long time and now a Sales Engineer. My personality is that of an Activator (for you CliftonStrengths nerds out there). I am great at generating energy and getting projects off the ground. I want my energy to be contagious. I want others to fall in love with technology and their careers. My hope is others can learn from my mistakes and build on the lessons I have learned. My biggest challenge is figuring out where that is. Is that as a coach? Consultant? Podcaster? All I know is that I am in the right place to figure that out as a member of our awesome open source community.”

I just want to wholeheartedly thank Eric for taking time out of his extremely busy schedule to prepare an interview with Linux++. Eric’s passion for Linux and open source software is palpable and contagious in every interaction. He is an extremely helpful member of the community who loves to share his knowledge of technology and is always willing to help. Be warned: what seems like it might be a short conversation with Eric can often turn into hours of deep discussion about technology, philosophy, and a number of other engaging topics. Thanks again for the wonderful interview, Eric, and I can’t wait to see what lies in store for the Sudo Show!

If you would like to keep up with the latest news from Eric, you can find him on Twitter, Mastodon, YouTube, Facebook, GitLab, the Linux Delta Matrix server, or his personal website. If you would like to check out the Sudo Show, you can find out more on Twitter or the official website.

Exploring the Linux System

This week on Linux++, we’re going to follow up on our dive into file systems from the last issue with a look at another very important component of Linux: the windowing system. This component provides the agreed-upon protocol that graphical environments, like your favorite desktop environment or window manager, hook into in order to draw windows on screen and receive input. Though there have been many ideas for newer display server protocols, it has become practically unanimous among Linux developers that Wayland is the way forward.
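To make the idea of an “agreed-upon protocol” a little more concrete, here is a minimal Python sketch of how a Wayland client frames a single request before writing it to the compositor’s socket. It assumes the core protocol’s wire layout: a 32-bit ID of the target object, then a 32-bit word holding the total message size in bytes (upper 16 bits) and the opcode (lower 16 bits), followed by 4-byte-aligned arguments in the host’s native byte order. The helper names `encode_message` and `decode_header` are hypothetical, and the new object ID 2 is an illustrative choice. The sketch only builds and parses bytes; it does not connect to a real compositor.

```python
import struct

# Opcodes of two wl_display requests, in the order they appear in the core
# protocol definition (wayland.xml): sync = 0, get_registry = 1.
WL_DISPLAY_SYNC = 0
WL_DISPLAY_GET_REGISTRY = 1

# The wl_display singleton always has object ID 1 on every connection.
WL_DISPLAY_ID = 1


def encode_message(object_id: int, opcode: int, args: bytes = b"") -> bytes:
    """Frame one request (hypothetical helper): 8-byte header + arguments.

    Header word 1 is the ID of the target object. Header word 2 packs the
    total message size in bytes (upper 16 bits) and the opcode (lower 16
    bits). Wayland uses the host's native byte order, hence "=" below.
    """
    if len(args) % 4 != 0:
        raise ValueError("arguments must be padded to a multiple of 4 bytes")
    size = 8 + len(args)
    return struct.pack("=II", object_id, (size << 16) | opcode) + args


def decode_header(message: bytes) -> tuple[int, int, int]:
    """Split the first 8 bytes back into (object_id, size, opcode)."""
    object_id, word = struct.unpack("=II", message[:8])
    return object_id, word >> 16, word & 0xFFFF


# Ask wl_display to create a wl_registry; 2 is a client-chosen new object ID
# picked purely for illustration.
request = encode_message(WL_DISPLAY_ID, WL_DISPLAY_GET_REGISTRY, struct.pack("=I", 2))
print(decode_header(request))  # (1, 12, 1)
```

In a real client, libwayland-client generates this framing from the protocol XML, so application and toolkit developers rarely touch raw bytes. The point of the sketch is simply that every compositor and client agrees on this small, fixed message format.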

Let’s take a quick look at the history of windowing systems on Linux, why Wayland is an important milestone, and what the future may look like for the new(er) Linux windowing system.

Wayland History

In 1992, one of the most important events in Linux history took place: Orest Zborowski ported the X Window System (X11) to the Linux kernel, enabling graphical user interface (GUI) support for the very first time in the young, open source operating system kernel. However, X11 was not a new piece of software by any means at that time. In fact, X had already enjoyed nearly 8 years of development since its initial conception at the Massachusetts Institute of Technology as a successor to the W window system, which had been developed at Stanford for the V operating system.

Over the formative years of kernel development, X11 became integral to the Linux experience and, in 2004, the X.Org Foundation was created to ensure the continued development of X11. However, as Linux and its software ecosystem began to evolve rapidly, along with the explosive growth in the variety of chipsets and device form factors (like tablets and smartphones), it became obvious that something was needed to replace the legacy architecture of X11 in order to keep Linux working across multiple devices and architectures. It wouldn’t be long before a formidable replacement made its way into the greater Linux developer consciousness.

In 2008, Kristian Høgsberg, a Red Hat employee and X.Org developer, began working on a side project in his spare time. It had a very ambitious goal: create a new windowing system where “every frame is perfect, by which applications will be able to control the rendering enough that we’ll never see tearing, lag, redrawing or flicker.” While Høgsberg was traveling through the town of Wayland, Massachusetts, the project’s architecture and implementation came together in his mind. In honor of that eureka moment, the project took on the name of the small Massachusetts town: Wayland.

By October 2010, Wayland had been accepted as a freedesktop.org project. Many in the Linux developer community soon declared Wayland the future of Linux display and the successor to X11. A number of X.Org developers began working on Wayland, as well as on a compatibility layer, XWayland (an X server that runs as a Wayland client), in order to smooth the eventual transition.

By 2013, almost everyone had declared their support for Wayland. However, Canonical, the creator of Ubuntu, the most popular desktop Linux offering in the world, was not satisfied with the progress that had been made on Wayland or with the functionality it offered at the time. With an upcoming push towards mobile convergence, including the new Ubuntu Touch mobile operating system and the Ubuntu Edge smartphone, Canonical announced a competing project, Mir, in order to accelerate the timeline for Ubuntu Touch and its Unity8 interface.

Of course, this decision was extremely controversial in the open source community, and many complained that Canonical was diverting valuable engineering resources away from Wayland. Both projects were developed in parallel for roughly four years, until Canonical announced in 2017 that it was discontinuing its convergence initiatives as well as the Unity desktop environment, which would be replaced by GNOME 3 in the 18.04 LTS release. Since Mir had diverged considerably from Wayland over the years, Canonical halted its development as a competing display server. Instead of abandoning the code, which had been open source from the start, the Mir developers pivoted the project into a library for building Wayland compositors. Today, all sights are set on Wayland once more, allowing for a united development front.

The Wayland Architecture

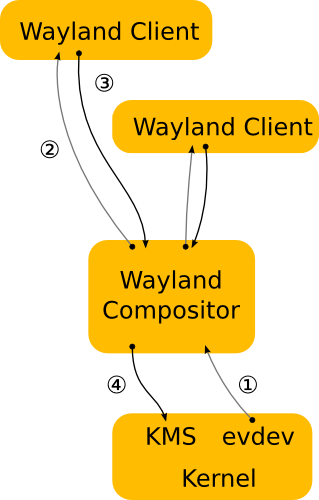

It is important to remember that Wayland is simply a communication protocol that allows a display server to talk to its clients. The protocol is implemented in C by the libwayland library, which both compositors and clients build on. In the Wayland model, a display server that uses the Wayland protocol to interact with its clients becomes a Wayland compositor. The compositor does a lot of the heavy lifting, like merging the window buffers into a single image representing the screen, as well as blending, fading, scaling, rotating, or duplicating the application windows on that screen.
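
To make the “it is just a protocol” point concrete, here is a minimal Python sketch of how a single Wayland message is laid out on the wire, following the wire format described in the Wayland protocol documentation: a 32-bit sender object ID, then a 32-bit word packing the total message size in bytes (upper 16 bits) and the opcode (lower 16 bits), followed by arguments padded to 32 bits, all in the host’s native byte order. The example builds the `wl_display.get_registry` request (object 1, opcode 1), assuming the client picks ID 2 for the new registry object. The helper names are hypothetical; real clients let libwayland do this encoding and send the bytes over a Unix socket, which this sketch does not do.

```python
import struct


def encode_message(object_id: int, opcode: int, args: bytes = b"") -> bytes:
    """Pack one Wayland wire message (hypothetical helper, not libwayland API)."""
    if len(args) % 4:
        raise ValueError("arguments must be padded to a 32-bit boundary")
    size = 8 + len(args)  # the header itself is two 32-bit words
    # "=" selects native byte order with standard sizes, as the wire format uses host order.
    return struct.pack("=II", object_id, (size << 16) | opcode) + args


def decode_header(data: bytes) -> tuple:
    """Return (object_id, size_in_bytes, opcode) from the first 8 bytes."""
    object_id, word = struct.unpack_from("=II", data)
    return object_id, word >> 16, word & 0xFFFF


# wl_display is always object 1; get_registry is its request with opcode 1,
# carrying one new_id argument: the ID the client assigns to the registry
# (2 here is an arbitrary, assumed choice).
WL_DISPLAY_ID = 1
GET_REGISTRY_OPCODE = 1
msg = encode_message(WL_DISPLAY_ID, GET_REGISTRY_OPCODE, struct.pack("=I", 2))
print(decode_header(msg))  # (1, 12, 1)
```

Packing size and opcode into one word keeps every message header a fixed 8 bytes, so the receiver can always read the header first and then know exactly how many argument bytes follow.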

Since Wayland is simply the protocol, compositors must be built using the Wayland protocol–a job that has been taken on by downstream Linux desktop environments. The Wayland developers have put out a reference implementation, known as Weston, to try to ease the burden as much as possible. However, Weston is just that–a reference implementation that should NOT be used in production.

The Wayland model has a much simpler architecture. Unlike in X11, the Wayland compositor is the display server. The compositor talks directly to the kernel’s KMS (kernel mode setting, which drives the displays) and evdev (the kernel’s input event interface), with no separate server in between. Therefore, the Wayland protocol allows the compositor to send input events directly to the clients and lets the clients send damage events (reports of which regions of a window have changed) directly back to the compositor.
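
The direct input-and-damage loop described above can be sketched as a toy model in Python. Everything here (`Surface`, `ToyCompositor`, and their methods) is hypothetical and heavily simplified: there are no buffers, no rendering, and no real protocol objects. It only shows the compositor picking the topmost surface under the pointer from its own scenegraph, delivering the event in surface-local coordinates, and collecting the damage the client reports for the next repaint.

```python
from dataclasses import dataclass, field


@dataclass
class Surface:
    """A hypothetical client window: position and size in screen coordinates."""
    name: str
    x: int
    y: int
    width: int
    height: int
    events: list = field(default_factory=list)  # events delivered to this client

    def contains(self, px: int, py: int) -> bool:
        return self.x <= px < self.x + self.width and self.y <= py < self.y + self.height


@dataclass
class ToyCompositor:
    """Hypothetical compositor; surfaces are ordered bottom-to-top as a stand-in scenegraph."""
    surfaces: list
    damage: list = field(default_factory=list)  # pending regions, in screen coordinates

    def handle_pointer(self, px: int, py: int):
        # The compositor itself decides which client gets the input event.
        for surface in reversed(self.surfaces):  # topmost first
            if surface.contains(px, py):
                # Deliver in surface-local coordinates, as Wayland pointer events are.
                surface.events.append(("pointer_motion", px - surface.x, py - surface.y))
                return surface
        return None

    def commit_damage(self, surface: Surface, dx: int, dy: int, w: int, h: int) -> None:
        # The client reports a changed region relative to its own surface.
        self.damage.append((surface.x + dx, surface.y + dy, w, h))

    def repaint(self) -> list:
        # Recomposite only the damaged regions, then clear the pending list.
        regions, self.damage = self.damage, []
        return regions


term = Surface("terminal", 0, 0, 800, 600)
browser = Surface("browser", 400, 300, 800, 600)
comp = ToyCompositor([term, browser])
target = comp.handle_pointer(500, 350)  # both overlap here; browser is on top
comp.commit_damage(target, 0, 0, 100, 20)
print(target.name, target.events, comp.repaint())
# browser [('pointer_motion', 100, 50)] [(400, 300, 100, 20)]
```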

This lets the system bypass many of the steps that X11 requires between the X server and a separate X compositor, removing latency at each stage of the transaction. Of course, because Wayland isn’t considered “prime time” just yet, there is a migration path between X11 and Wayland, aptly named XWayland, which is especially useful for those who rely on backwards compatibility. This architecture adds back an X server, which connects to the Wayland compositor as a client and serves X clients that do not speak the Wayland protocol.
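
The latency argument comes down to how many processes an input event passes through before the screen updates. The sketch below encodes, as plain Python data, a simplified version of the sequences described in the Wayland project’s architecture documentation for a composited X11 desktop and for Wayland, then prints the number of hand-offs in each. It counts message hops only and says nothing about how long each hop actually takes; the XWayland path is left out.

```python
# Each step is (sender, receiver) while handling one input event that makes
# a window redraw. Simplified from the Wayland architecture documentation.
X11_PATH = [
    ("kernel (evdev)", "X server"),  # input event
    ("X server", "X client"),        # X server picks the target window
    ("X client", "X server"),        # client sends rendering requests
    ("X server", "X compositor"),    # damage event for the changed region
    ("X compositor", "X server"),    # compositor's recomposite requests
    ("X server", "KMS"),             # buffer copy or page flip
]

WAYLAND_PATH = [
    ("kernel (evdev)", "Wayland compositor"),  # input event
    ("Wayland compositor", "Wayland client"),  # compositor picks the target surface
    ("Wayland client", "Wayland compositor"),  # client renders, then sends damage
    ("Wayland compositor", "KMS"),             # recomposite and page flip
]


def describe(path):
    """Render a path as 'a -> b -> c', checking each hop starts where the last ended."""
    for (_, receiver), (sender, _) in zip(path, path[1:]):
        if receiver != sender:
            raise ValueError(f"broken chain: {receiver!r} then {sender!r}")
    return " -> ".join([path[0][0]] + [receiver for _, receiver in path])


for label, path in (("X11", X11_PATH), ("Wayland", WAYLAND_PATH)):
    print(f"{label}: {len(path)} hops: {describe(path)}")
```

The two hops Wayland drops are exactly the round trip between the X server and the separate X compositor, which is the overhead the paragraph above refers to.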

The Way Forward with Wayland

Though work on Wayland has been ongoing for more than a decade, most desktops still cannot run on it as a standalone display server without XWayland in the mix. As a result, adoption of Wayland by distributions has been slow. Moreover, because NVIDIA refuses to open-source its drivers and has not focused on Wayland support, many people with NVIDIA graphics cards simply cannot use a Wayland session. Since NVIDIA cards make up a large portion of the overall PC market, making Wayland (or even XWayland) the default is hard to recommend.

However, several desktop environments have been hard at work building their own Wayland compositors, with GNOME and KDE leading the pack. Every major release seems to bring both projects one (or even several) steps closer to using Wayland by default. Thanks to this improved support and compatibility, GNOME lets users choose a Wayland session in place of X11 at login. In fact, in 2016, GNOME’s display manager (GDM) made Wayland (with XWayland) the default session over X11. However, most distributions override this upstream GNOME default to keep X11 as the default windowing system.
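
If you want to check which kind of session you actually logged into, the session usually advertises it through environment variables. Below is a minimal Python sketch, assuming the common conventions: `XDG_SESSION_TYPE` (commonly set at login to values such as `wayland`, `x11`, or `tty`), `WAYLAND_DISPLAY` (the Wayland socket name, set in Wayland sessions), and `DISPLAY` (set in X11 sessions, and also inside Wayland sessions where XWayland is available). The function name is hypothetical, and these variables can be missing or misleading in some setups, such as over SSH.

```python
import os
from typing import Mapping


def session_kind(env: Mapping[str, str] = os.environ) -> str:
    """Best-effort guess at the graphical session type: 'wayland', 'x11', or 'unknown'."""
    # Prefer the explicit session type when the login stack provides one.
    session = env.get("XDG_SESSION_TYPE", "").lower()
    if session in ("wayland", "x11"):
        return session
    # Otherwise fall back to the display the session exports. WAYLAND_DISPLAY
    # is checked first because a Wayland session with XWayland also sets DISPLAY.
    if env.get("WAYLAND_DISPLAY"):
        return "wayland"
    if env.get("DISPLAY"):
        return "x11"
    return "unknown"


if __name__ == "__main__":
    print(session_kind())
```

Passing the environment in as a mapping keeps the function easy to test without touching the real session.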

One distribution that doesn’t is Fedora, the Red Hat-sponsored community distribution, which is known for keeping its GNOME 3 as close to upstream as possible, including using Wayland by default. This makes sense, as many of the X.Org developers who work on Wayland, as well as core GNOME team members, are also Red Hat engineers, and both projects have had ties to the company since very early on.

Fedora has long been a testing ground for features and components that may eventually make their way into Red Hat’s enterprise offering, Red Hat Enterprise Linux (RHEL). Because of its progressive use of cutting-edge features, Fedora has become one of the most popular distributions, especially among developers who work on core systems-level components in the Linux ecosystem.

So, as Wayland continues to improve and the compositors built on it become more refined, we should see broader adoption of Wayland over the next few years. I know that I’ll be keeping an eye on the progress that the Wayland developers make!

Linux Desktop Setup of the Week

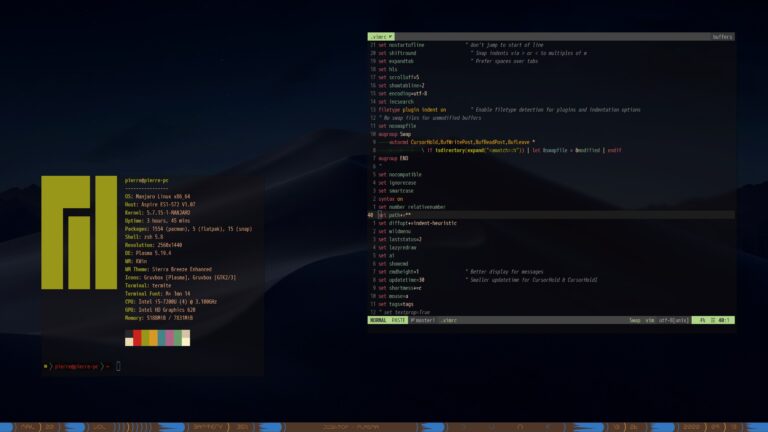

This week’s selection was presented by u/pierrezak in the post titled [KDE Plasma][Polybar] Spice tube. Here is the screenshot that they posted:

And here are the system details:

OS: Manjaro x86_64
DE: KDE Plasma 5.19.4
WM: KWin
Bar: Polybar
Shell: zsh 5.8
Terminal: Termite
Theme: Sierra Breeze Enhanced
Icons: Gruvbox
Font: M+ 1mn

Thanks, u/pierrezak, for an awesome, unique, and well-themed KDE Plasma desktop!

If you would like to browse, discover, and comment on some interesting, unique, and just plain awesome Linux desktop customization, check out r/unixporn on Reddit!

See You Next Week!

I hope you enjoyed reading about the goings-on of the Linux community this week. Feel free to start up a lengthy discussion, give me some feedback on what you like about Linux++ and what doesn’t work so well, or just say hello in the comments below.

In addition, you can follow the Linux++ account on Twitter at @linux_plus_plus, join us on Telegram here, or send email to linuxplusplus@protonmail.com if you have any news or feedback that you would like to share with me.

Thanks so much for reading, have a wonderful week, and long live GNU/Linux!